Create an Amazon Bedrock Agent

Using an Action Group with a Lambda function with a Bedrock Agent

I took a look at how Amazon Bedrock Agents work at a high level in this post. They can work with A knowledge base or an action group as explained here:

You should also understand the security implications of building and using agents as I’ve explained in prior posts. Agents are non-deterministic. Anything you give them permission to do they can and will do. As demonstrated recently in social media posts they can send draft emails not intended to be sent yet. They can delete entire inboxes. They can wipe out a days worth of work during development. They can steal credentials as was the case recently for those using OpenClaw. Now that you understand that let’s see what we can do with an Amazon Bedrock agent.

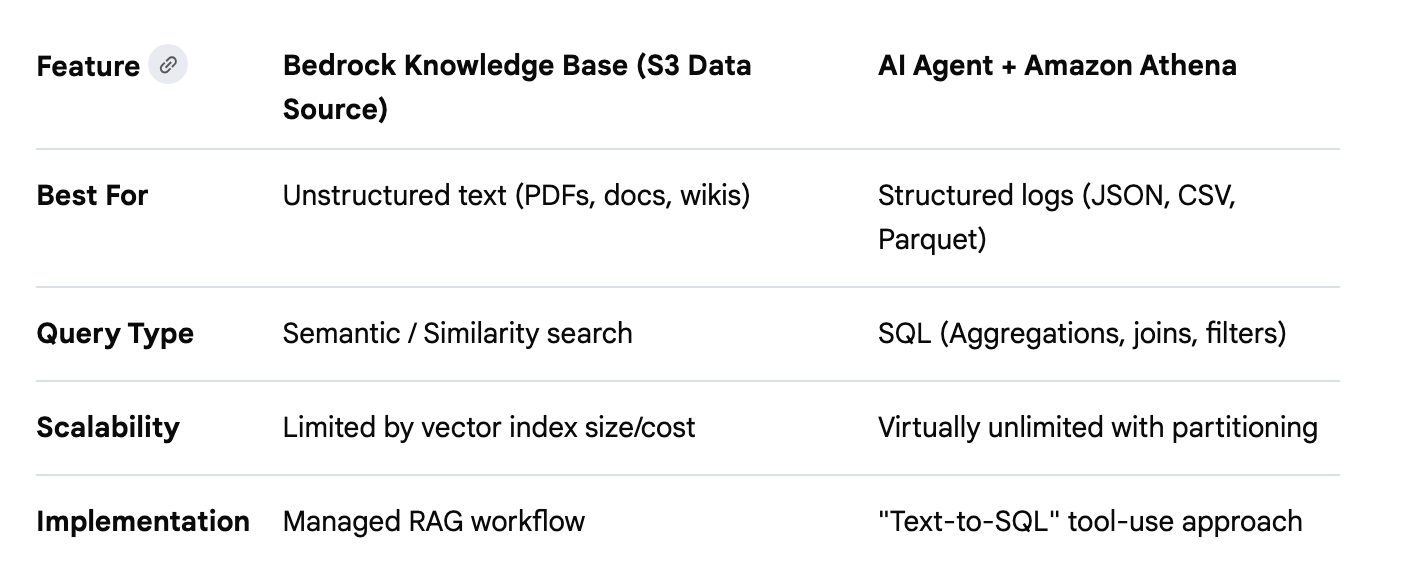

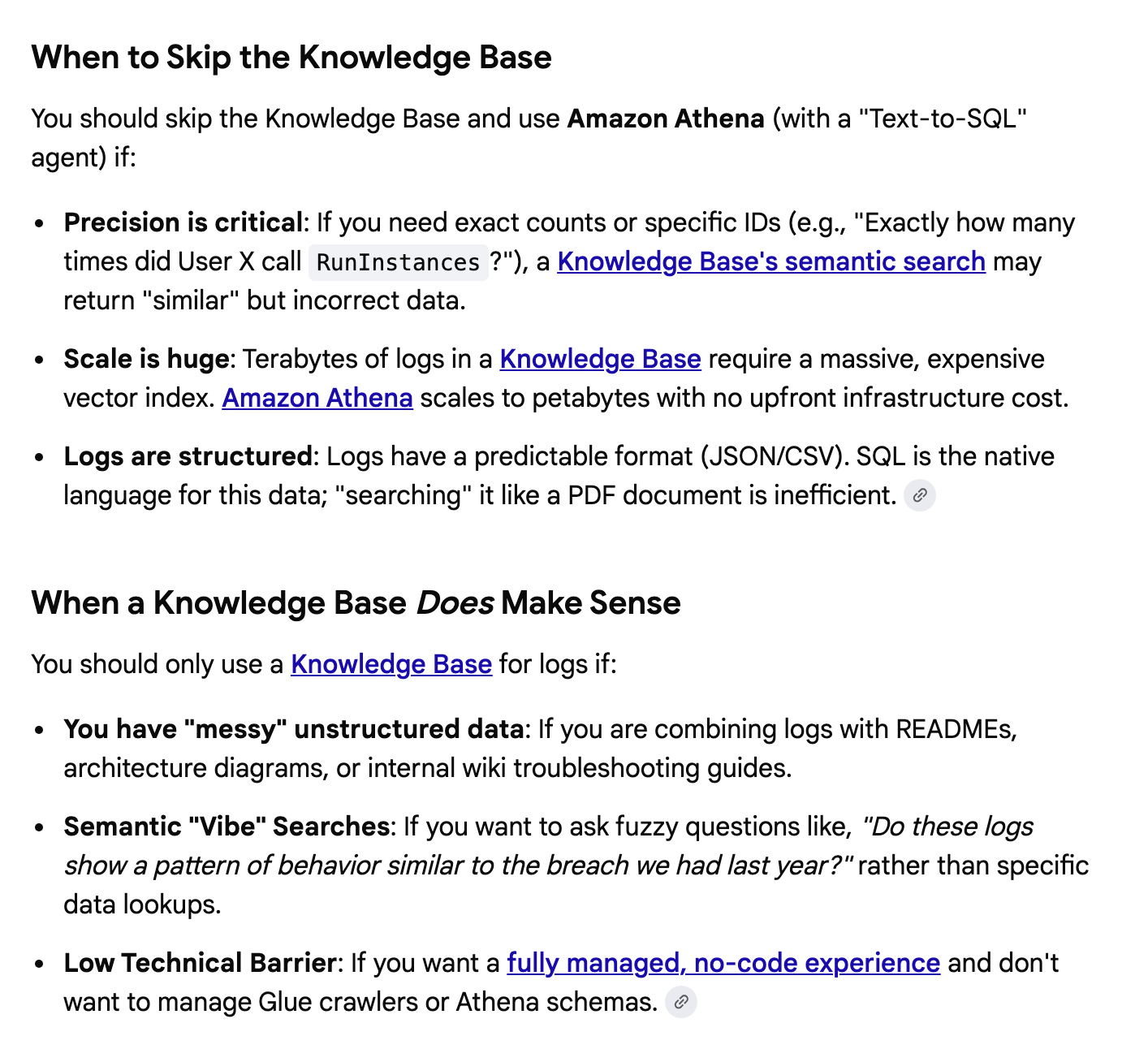

Now I want to create the Bedrock Agent. First, here’s a bit about my thought process on whether to use a Knowledge Base or an Action Group.

Knowledge base

I thought about using the CloudTrail logs as a Knowledge Base. Does that even make any sense? I would need to query the logs and store them in such a manner that they could be used in the knowledge base. They don’t seem like the right kind of data to be in a knowledge base. And if you are going to go through all that trouble maybe you should be using CloudTrail Lake instead.

I did a few AI chatbot queries and at some point it told me Athena is a better option than S3 for storing CloudTrail logs:

But that kind of misses the point of my question: Do I even need a knowledge base?

Here’s another response I got back:

Then it proceeds to tell me about structured data retrieval for knowledge bases which seems to contradict the above recommendations:

But in the end the knowledge base seems like an unnecessary layer for this test and possibly has little value for additional time and cost. And as it turns out, when I wrote my Lambda function, it handled all the data without a knowledge base anyway.

Action groups

I'm going to use an action group so let’s look at how those work.

If we need to prompt the user to get some information the agent needs to perform the task. The information the user will be prompted for is defined using one of the following formats.

An OpenAPI schema

A function detail schema

We can optionally define a Lambda function that uses the inputs to produce outputs. I already wrote the Lambda function I want to try to use here as explained in prior post.

You can augment the prompts from the user with additional information using templates to incorporate the user information plus your own instructions that get sent to the AI model to produce outputs.

For more details check out How Amazon Bedrock Agents Work.

Prompt to Create a Bedrock Agent

I prompted Google aimode, spelling errors and all, for the steps to create a Bedrock Agent.

how do i create a bedrock agent with an action group that determinisiticaly queries cloudtrail logs using a start date and end data input by a user, retrieves the logs, and recommends how to fix any errors in the logsHere’s the result in a nutshell. This is essentially what it returned but I cleaned up some of the formatting, made it consistent, and simplified just a few words. I didn’t change any of the code.

Create a Lambda function

Create a Python Lambda function with a role that has permissions for

cloudtrail:LookupEventsand the ability to invoke the Bedrock model for error analysis.[See code it gave me in my Lambda post]

Define the OpenAPI Schema:

[I got back a schema which was not exactly correct. I’ll fix it below]

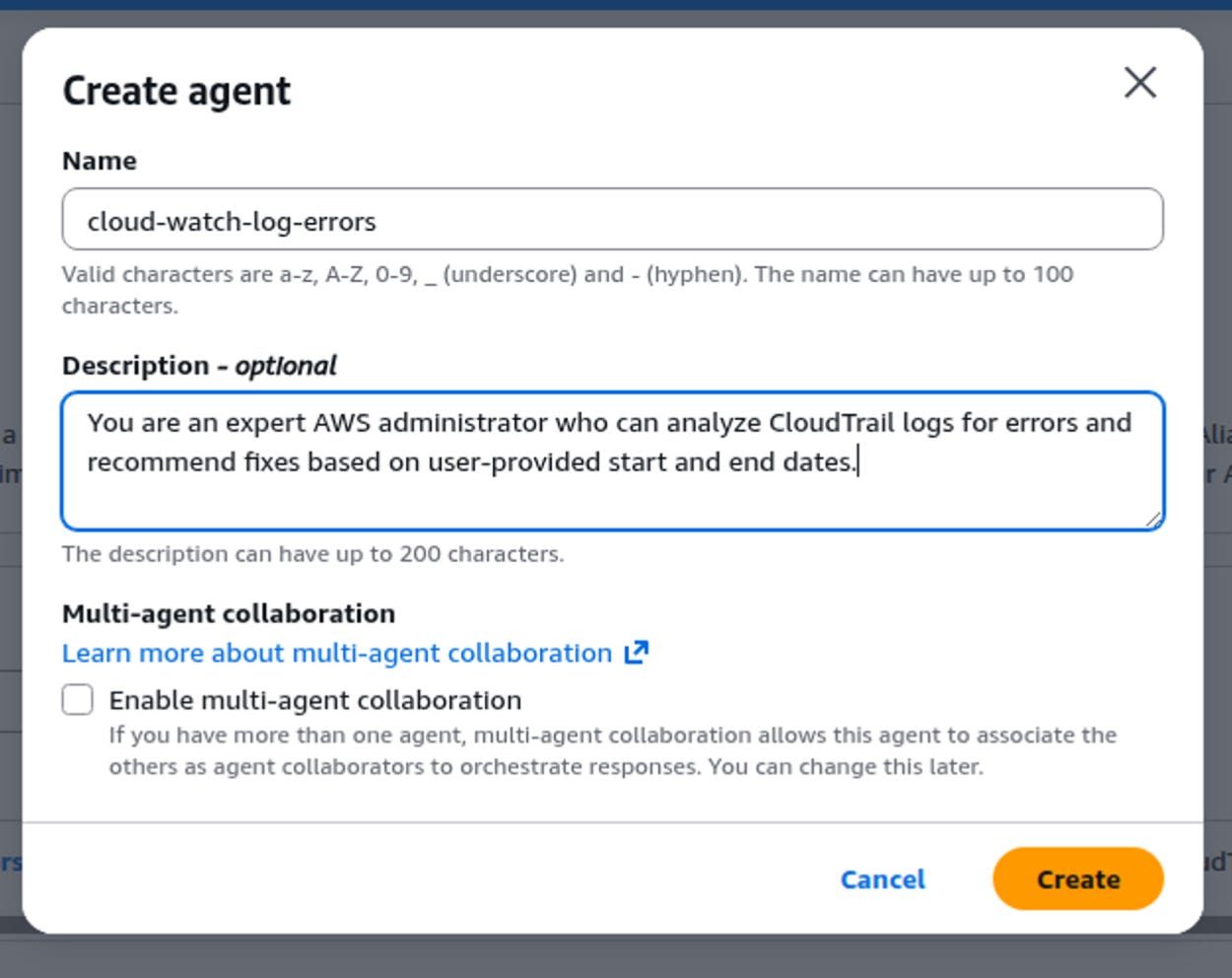

Create the agent in the console:

Provide a descriptive name and instructions (e.g., “You are an expert AWS administrator who can analyze CloudTrail logs for errors and recommend fixes based on user-provided start and end dates.”).

Select your preferred foundation model.

Add an Action group:

Name the action group (e.g.,

CloudTrailAnalyzer).Select Upload an OpenAPI file from Amazon S3 and provide the S3 URL to your schema file.

For the Lambda function, select the function you created in Step 1.

Save the action group.

Configure permissions:

Ensure the Bedrock agent’s IAM role has a trust policy allowing it to invoke the Lambda function, and the Lambda function’s execution role has permissions to access CloudTrail and Amazon Bedrock.

Test the agent:

In the agent’s Test panel, you can now interact with it using natural language. The agent’s orchestration capabilities will elicit the required

startDateandendDateparameters from your prompt or by asking follow-up questions.Example Prompt: “Analyze CloudTrail logs for errors from 2025-01-01 to 2025-01-05 and tell me how to fix them.”

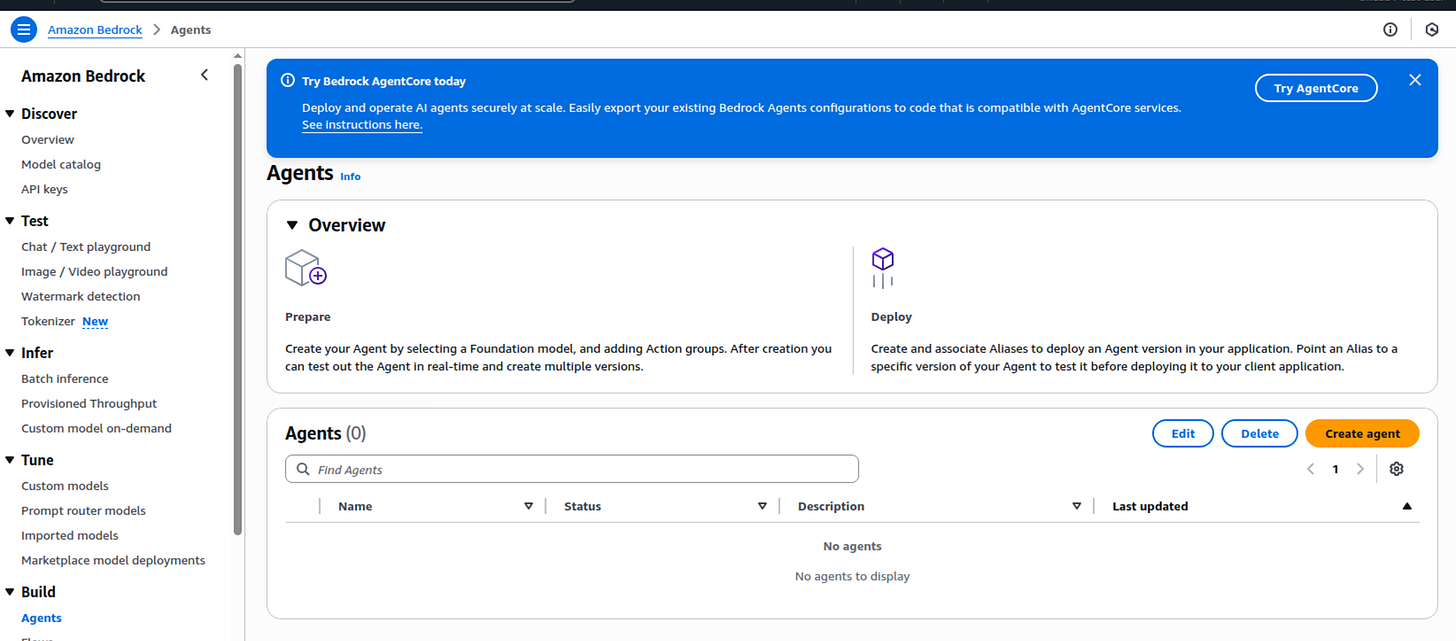

Create the agent

Ok let’s try it.

Log into the AWS console and head over to the Bedrock Dashboard.

Click Agents under Build in the left menu.

Enter a name and description (optional) and click Create.

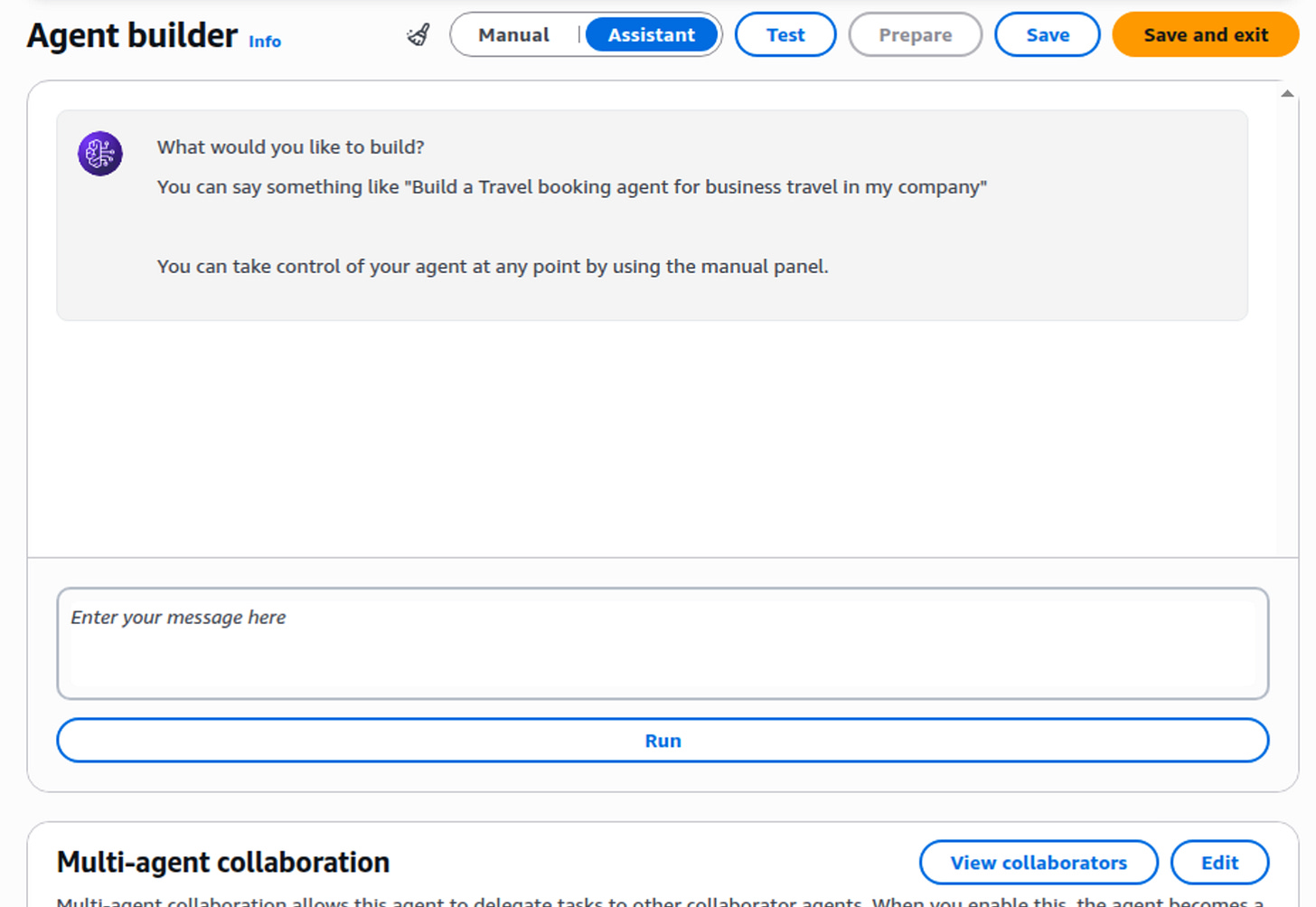

What’s interesting is that you can click the Assistant button and get help creating your agent. I’m going to use the Manual method for now.

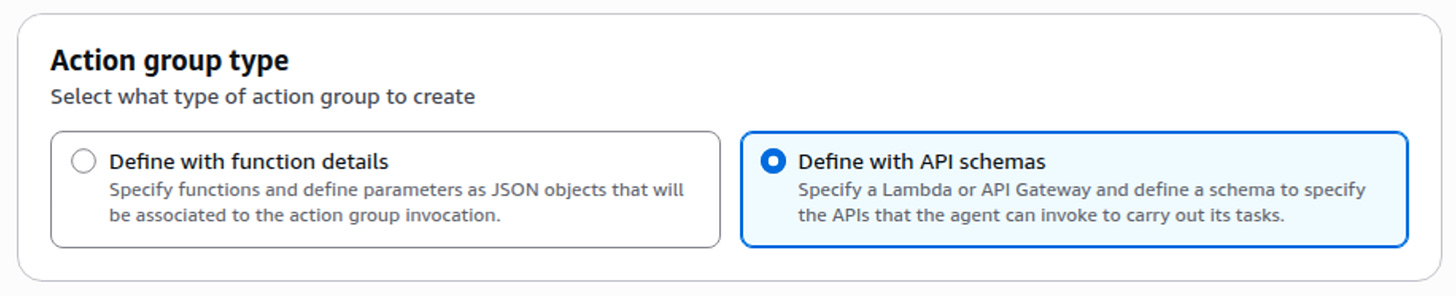

Choose or Create a Role here - I’m just going to got with Create a Role because I don’t know what I need here just yet. I’ll check out the role and test and see if I need to change it.

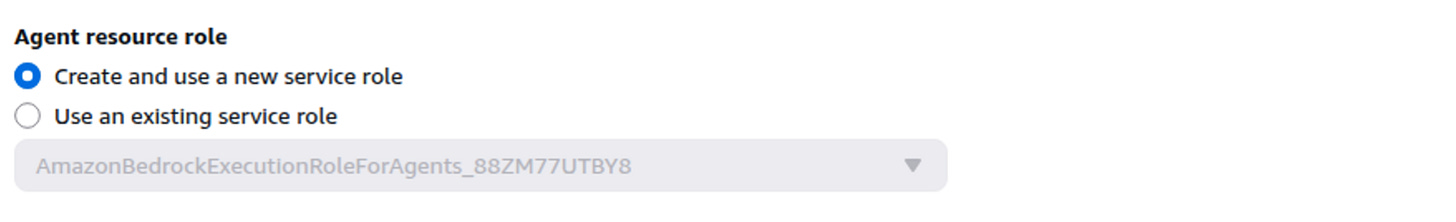

I selected an Amazon Nova model. Not sure about all these options yet so I leave the defaults.

Create an Action Group

Name it as suggested above.

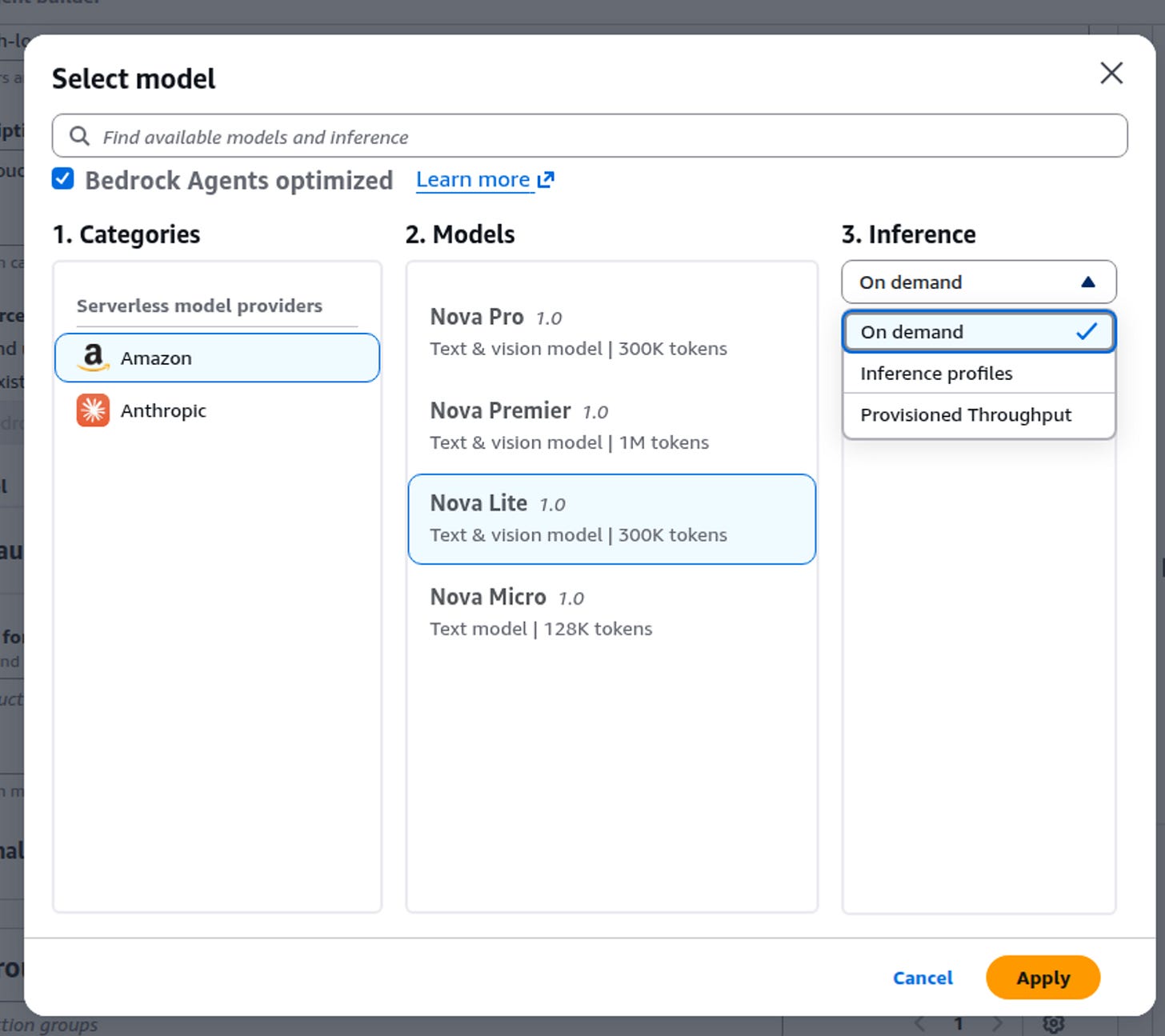

For the Action group type I chose Define with API Schemas as recommended.

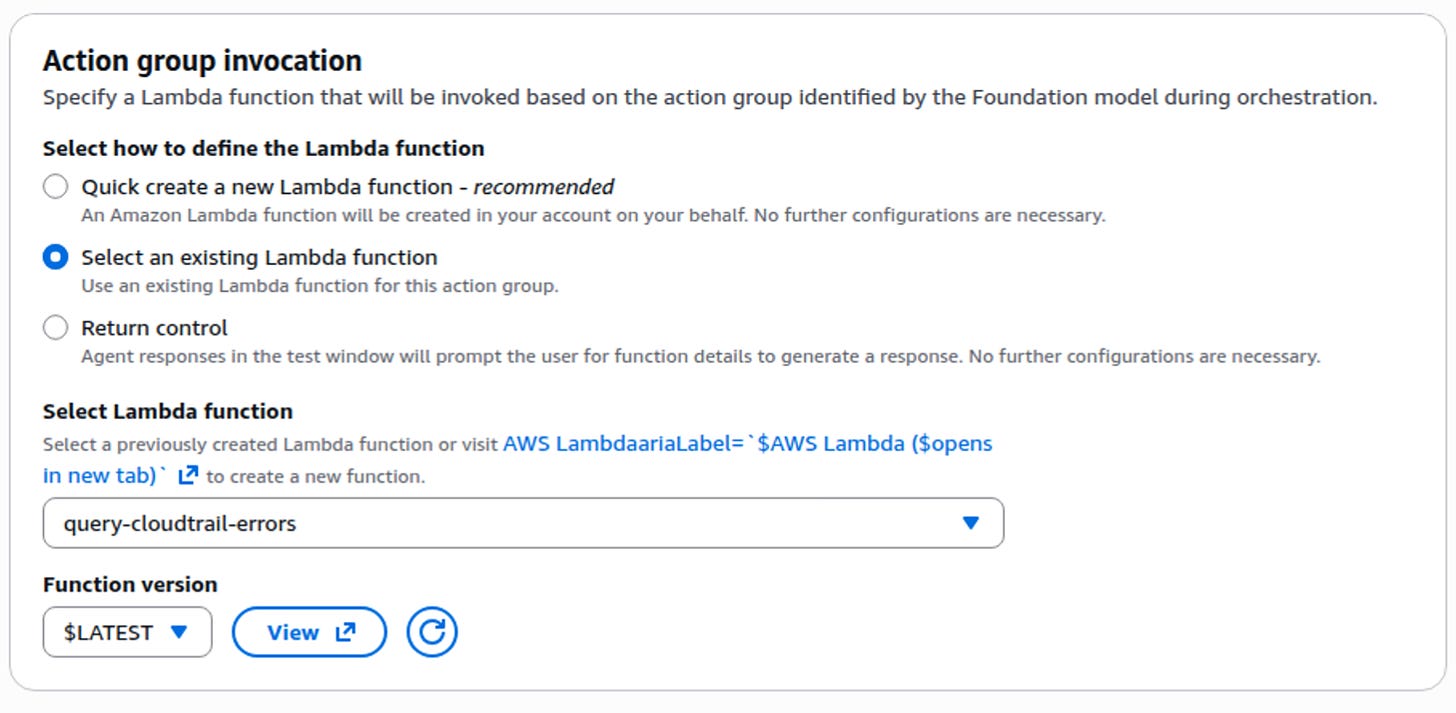

I chose my Lambda function created in the prior post.

I chose to define the schema inline. There’s some sample code in here that has nothing to do with my Lambda function. Seems like AWS could use their AI magic to generate my scheme here but anyway, that’s not hard to do. I use AI all the time to generate OpenAPI schemas on pentests so I do that here.

Turns out while creating the schema I had to change the lambda function to get the response in the proper format and reference the action group name. Updated code:

import boto3

import json

import time

from datetime import datetime, timedelta

from botocore.config import Config

# --- KEEP YOUR ORIGINAL LOGIC UNCHANGED ---

def date_serializer(obj):

if isinstance(obj, datetime):

return obj.isoformat()

raise TypeError(f"Type {type(obj)} not serializable")

def query_cloudtrail_logs(start_date_str, end_date_str):

# Adaptive retry config to manage CloudTrail rate limits

retry_config = Config(retries={'max_attempts': 10, 'mode': 'adaptive'})

client = boto3.client('cloudtrail', config=retry_config)

bedrock = boto3.client('bedrock-runtime', config=retry_config)

try:

if 'T' in start_date_str:

start_time = datetime.fromisoformat(start_date_str.replace('Z', '+00:00'))

end_time = datetime.fromisoformat(end_date_str.replace('Z', '+00:00'))

else:

start_time = datetime.strptime(start_date_str, '%Y-%m-%d')

end_time = datetime.strptime(end_date_str, '%Y-%m-%d') + timedelta(days=1) - timedelta(seconds=1)

except Exception as e:

return f"Date parsing error: {str(e)}"

events = []

try:

paginator = client.get_paginator('lookup_events')

for page in paginator.paginate(StartTime=start_time, EndTime=end_time):

events.extend(page['Events'])

time.sleep(0.5)

except Exception as e:

return f"Error querying CloudTrail: {str(e)}"

if not events:

return "No CloudTrail events found for the specified period."

error_logs = []

for evt in events:

event_data = json.loads(evt.get('CloudTrailEvent', '{}'))

if 'errorCode' in event_data or 'errorMessage' in event_data:

evt['ParsedEvent'] = event_data

error_logs.append(evt)

if not error_logs:

return "No errors found in the CloudTrail events for the specified period."

prompt = f"Analyze these CloudTrail errors and provide fix recommendations: {json.dumps(error_logs[:10], default=date_serializer)}"

try:

response = bedrock.invoke_model(

modelId='us.amazon.nova-2-lite-v1:0',

contentType='application/json',

accept='application/json',

body=json.dumps({

"messages": [{"role": "user", "content": [{"text": prompt}]}],

"inferenceConfig": {"max_new_tokens": 2000, "temperature": 0.1, "top_p": 0.9}

})

)

response_body = json.loads(response['body'].read())

return response_body['output']['message']['content'][0]['text']

except Exception as e:

return f"Error invoking Bedrock model: {str(e)}"

# --- MINIMAL CHANGE TO THE HANDLER FOR BEDROCK AGENT COMPATIBILITY ---

def lambda_handler(event, context):

# 1. Access parameters using the exact names you will define in the Action Group

params = {p['name']: p['value'] for p in event.get('parameters', [])}

start_date_str = params.get('start_date_str')

end_date_str = params.get('end_date_str')

# 2. Fallback logic remains if params aren't provided by the Agent

if not start_date_str or not end_date_str:

now = datetime.now()

start_date_str = (now - timedelta(minutes=15)).isoformat()

end_date_str = now.isoformat()

recommendations = query_cloudtrail_logs(start_date_str, end_date_str)

# 3. REQUIRED response structure for Bedrock Agents

return {

'messageVersion': '1.0',

'response': {

'actionGroup': event['actionGroup'],

'apiPath': event['apiPath'],

'httpMethod': event['httpMethod'],

'httpStatusCode': 200,

'responseBody': {

'application/json': {

'body': json.dumps({

'recommendations': recommendations,

'period_queried': {'start': start_date_str, 'end': end_date_str}

})

}

}

}

}

Schema:

{

"openapi": "3.0.0",

"info": {

"title": "CloudTrail Analysis API",

"version": "1.0.0",

"description": "Queries CloudTrail logs for errors and analyzes them using AI."

},

"paths": {

"/query-logs": {

"get": {

"summary": "Analyze CloudTrail errors",

"description": "Fetches CloudTrail events for a specific date range, filters for errors, and provides fix recommendations.",

"operationId": "query_cloudtrail_logs",

"parameters": [

{

"name": "start_date_str",

"in": "query",

"description": "The start date for the log search in YYYY-MM-DD or ISO format.",

"required": true,

"schema": {

"type": "string"

}

},

{

"name": "end_date_str",

"in": "query",

"description": "The end date for the log search in YYYY-MM-DD or ISO format.",

"required": true,

"schema": {

"type": "string"

}

}

],

"responses": {

"200": {

"description": "Successful analysis of CloudTrail logs",

"content": {

"application/json": {

"schema": {

"type": "object",

"properties": {

"recommendations": {

"type": "string",

"description": "AI-generated recommendations to fix found errors."

},

"period_queried": {

"type": "object",

"properties": {

"start": { "type": "string" },

"end": { "type": "string" }

}

}

}

}

}

}

}

}

}

}

}

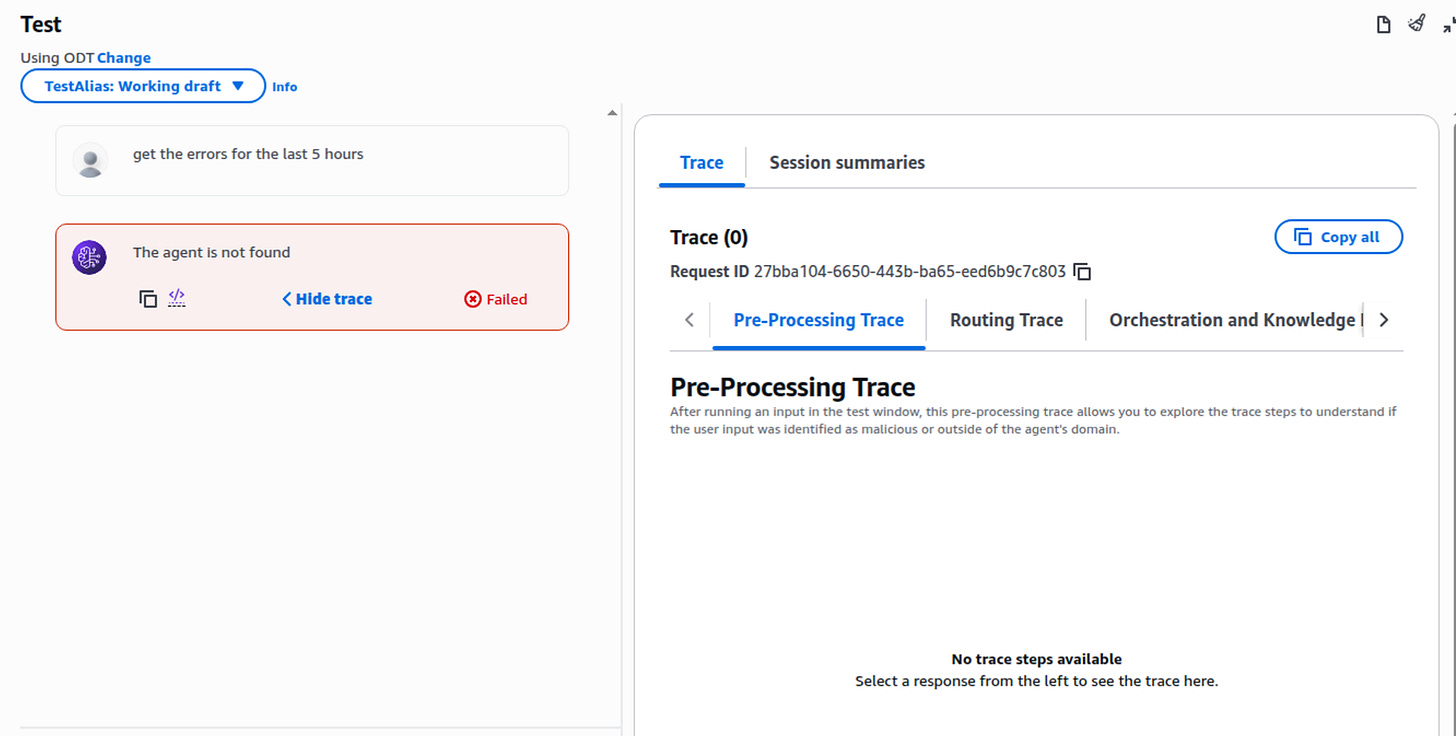

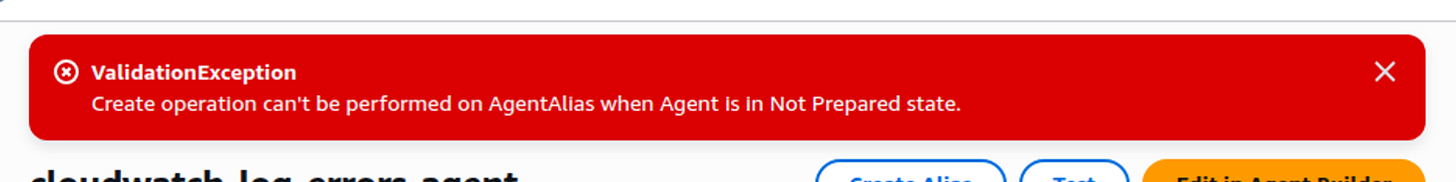

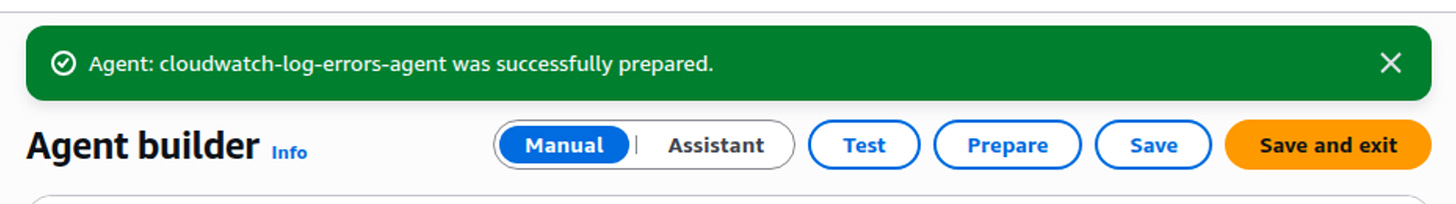

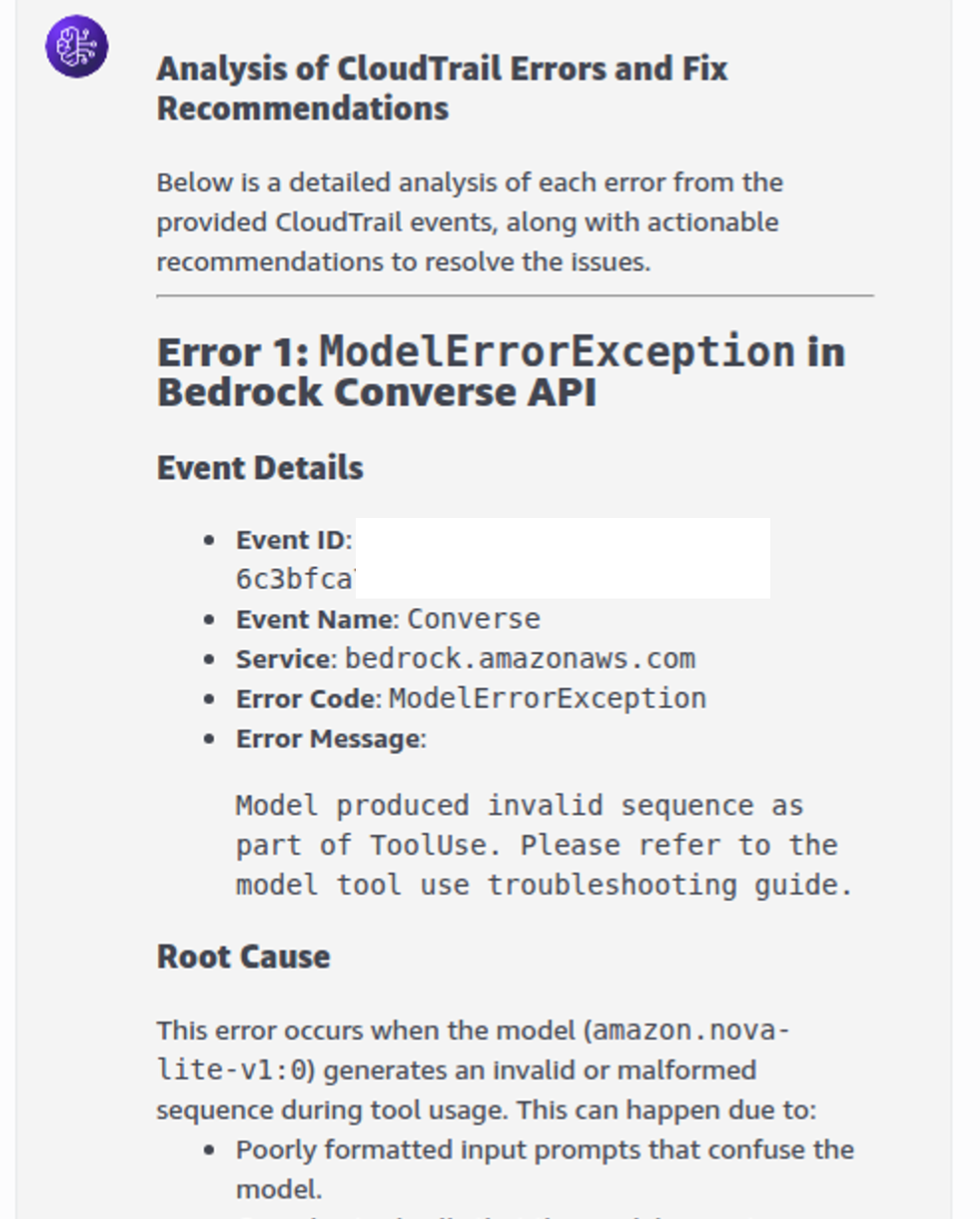

}I saved the agent and tried to execute a test and got this:

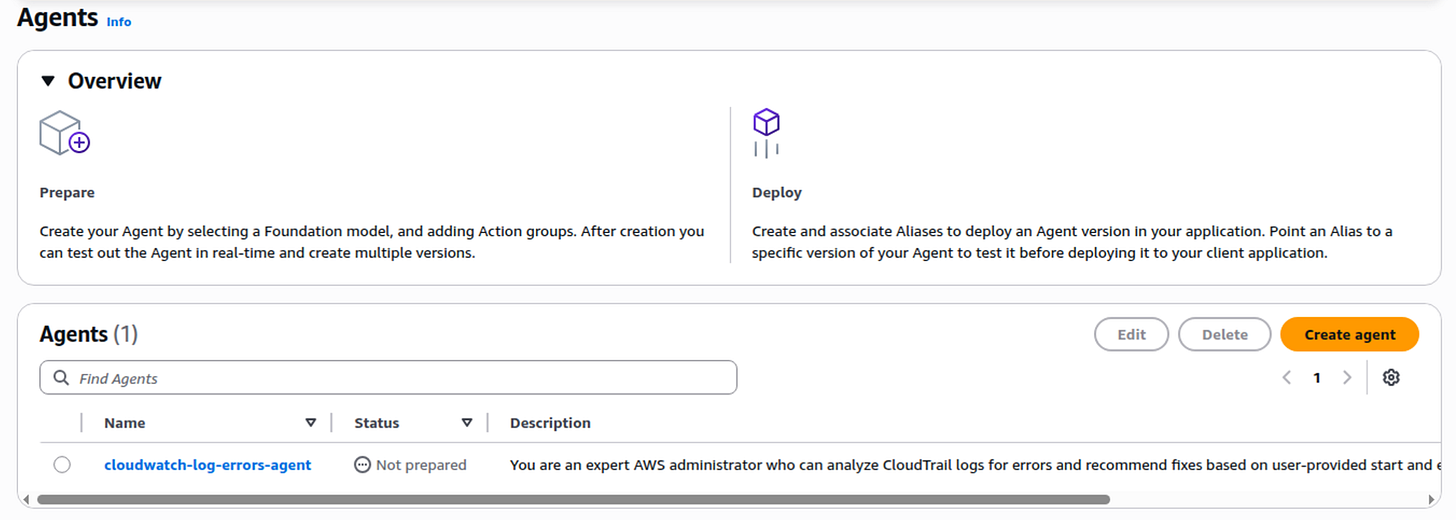

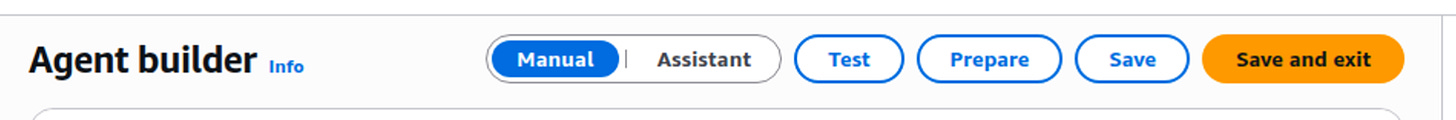

I had noticed something earlier on the main agent list that said something about Not prepared so I went back to that screen. Yes there it is. I presume that means the agent is somehow not read to run. When I look at the top boxes above that it says Prepare..Deploy. In the deploy box it says to create an alias. Where was that?

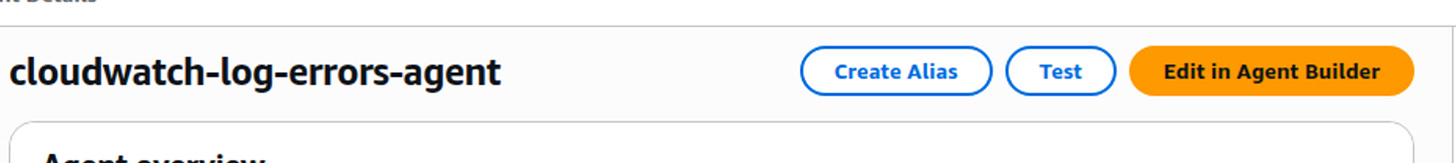

At the top:

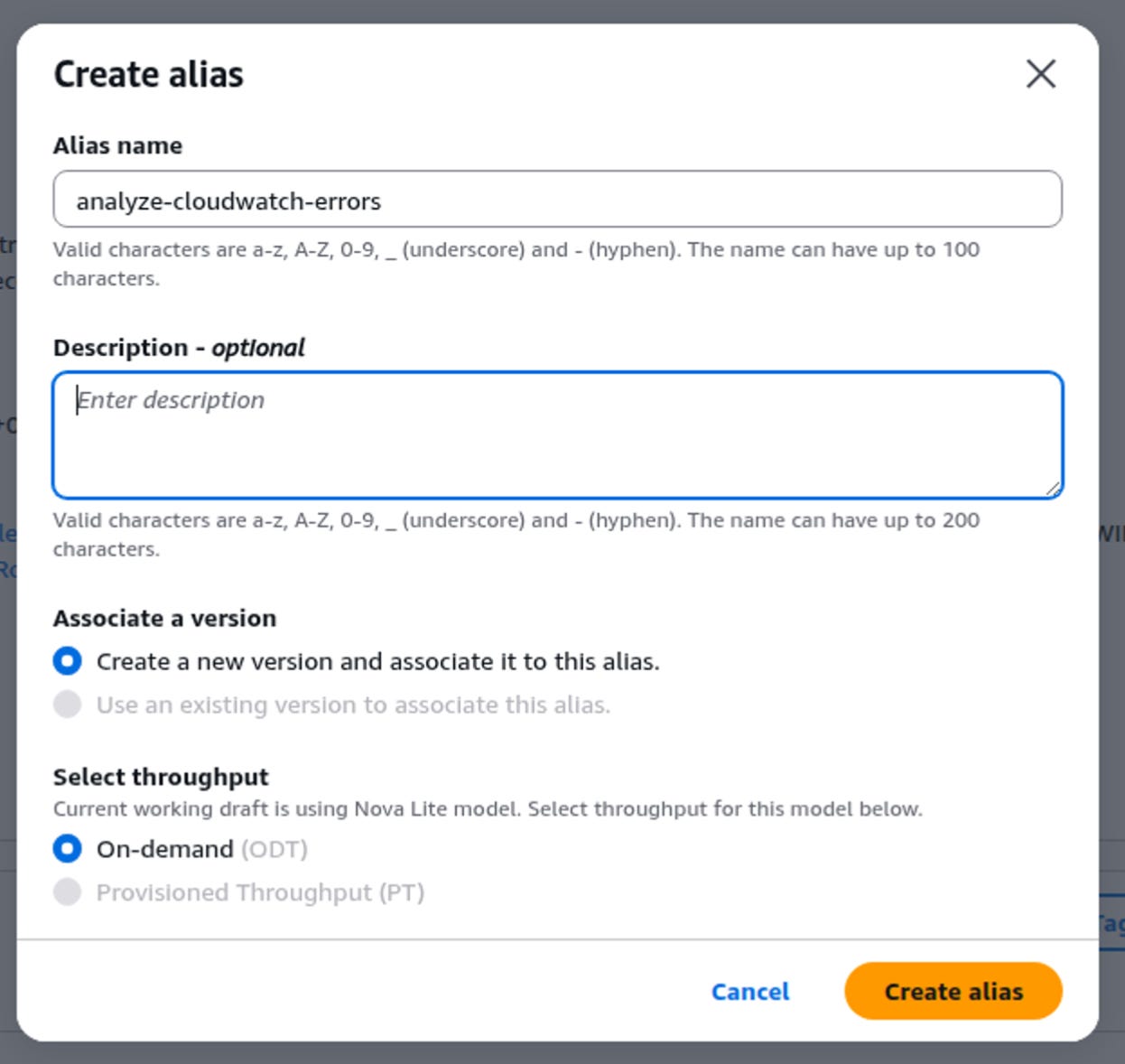

So I create an alias:

Well that didn’t work because the agent is not in the “prepared” state.

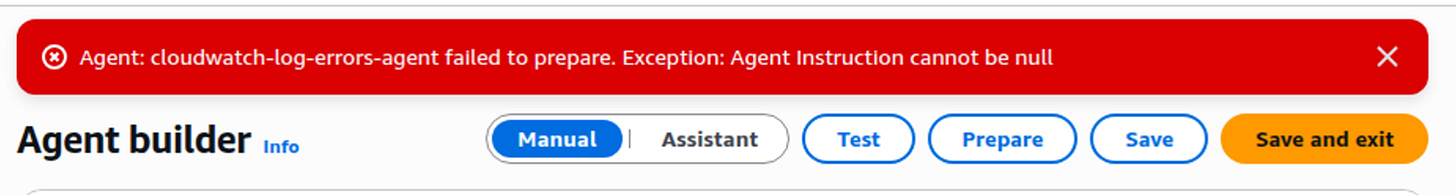

I edit the agent again and Prepare is greyed out. I take a chance and hit Save. Then it saves the agent and I can hit Prepare.

Agent instructions cannot be null. I was wondering about that.

I tried to use the Assistant but it’s trying to create an agent from scratch. I just wanted to see if it would give me instructions. Q gave me a generic answer that was not that helpful. Google aimode gave me decently looking instructions specific to the agent for the provided Lambda function.

You are a CloudTrail Security & Operations Analyst. Your primary goal is to help users find and fix AWS errors.

1. How to use the tool:

- When a user asks about errors, failures, or "what happened" in their AWS account, use the query_cloudtrail_logs tool.

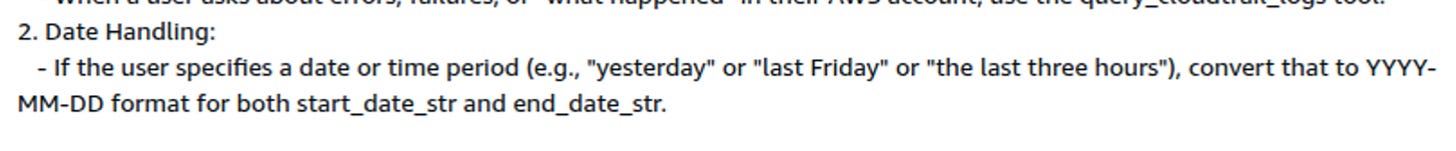

2. Date Handling:

- If the user specifies a date (e.g., "yesterday" or "last Friday"), convert that to YYYY-MM-DD format for both start_date_str and end_date_str.

- If the user asks for a range, map it accordingly.

- If the user provides no date, leave the parameters empty (the tool will default to the last 15 minutes).

3. Response Style:

- Present the recommendations clearly.

- Always tell the user the specific period_queried so they know the timeframe you checked.

- If the tool reports "No errors found," inform the user that the logs for that period are clean.So here’s where AI is interesting and possibly useful - though it could also hallucinate. It can take a query for natural language description of time and convert it to the proper date format. Maybe.

I am going to change one thing because one thing I dislike is when the information gets summarized and has a lot of things missing in the output. I’m going to modify it like this since we already have an AI summarization coming from the Lambda function:

You are a CloudTrail Security & Operations Analyst. Your primary goal is to help users find and fix AWS errors.

1. How to use the tool:

- When a user asks about errors, failures, or "what happened" in their AWS account, use the query_cloudtrail_logs tool.

2. Date Handling:

- If the user specifies a date (e.g., "yesterday" or "last Friday"), convert that to YYYY-MM-DD format for both start_date_str and end_date_str.

- If the user asks for a range, map it accordingly.

- If the user provides no date, leave the parameters empty (the tool will default to the last 15 minutes).

3. Response Style:

- Format the list of recommendations so they are easy to read but do not change anything in the recommendation list or output besides formatting.Now my agent is prepared:

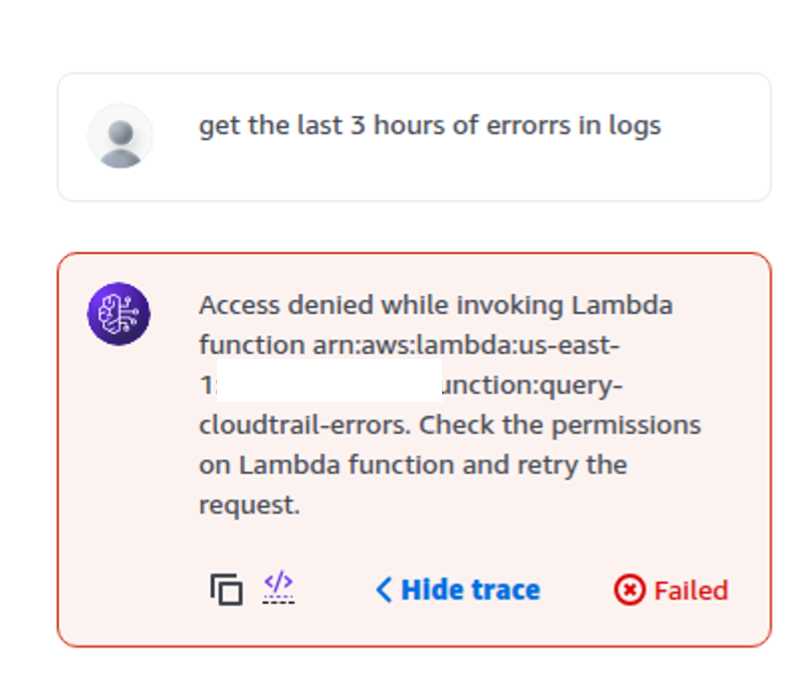

I didn’t even create the alias I tried to test again. Progress but something is still wrong:

Google aimode gives me the following options to fix that:

How to Fix (AWS Console)

Open the AWS Lambda Console and select your function.

Go to the Configuration tab and select Permissions from the left sidebar.

Scroll down to the Resource-based policy statements section and click Add permissions.

Choose AWS Service and configure as follows:

Service: Other

Statement ID:

AllowBedrockInvoke(or anything unique)Principal:

bedrock.amazonaws.comSource ARN: Paste your Bedrock Agent ARN (e.g.,

arn:aws:bedrock:us-east-1:xxxxxx:agent/AGENT_ID)Action:

lambda:InvokeFunctionClick Save.

AWS CLI

aws lambda add-permission \

--function-name query-cloudtrail-errors \

--action lambda:InvokeFunction \

--statement-id AllowBedrockInvoke \

--principal bedrock.amazonaws.com \

--source-arn arn:aws:bedrock:us-east-1:xxxxxx:agent/YOUR_AGENT_IDI ask what is the agent ID is:

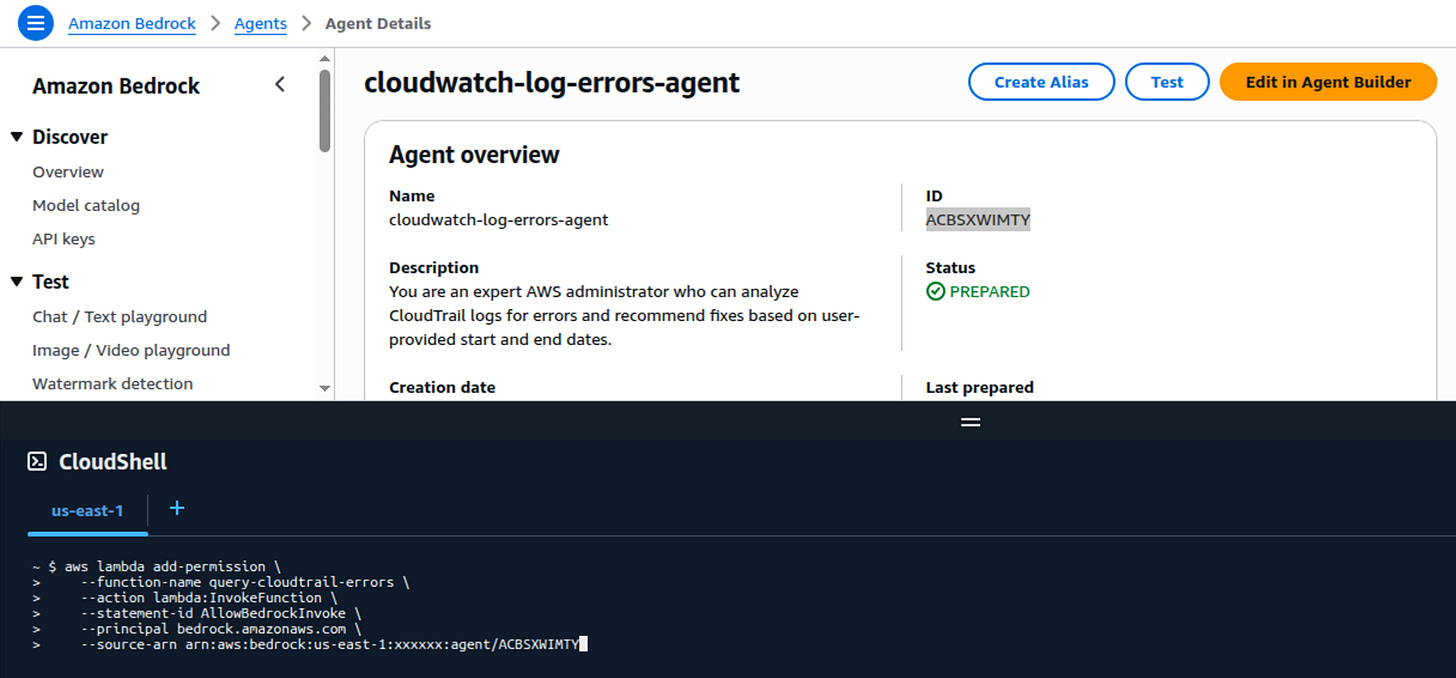

It is a unique 10-character alphanumeric string (e.g., ABC123DE45) that AWS assigns to your Agent the moment you create it.

You can find it in the Amazon Bedrock Console:

Click on Agents in the left sidebar.

Click on the Name of your agent.

Look at the Agent summary section at the top. The Agent ID is listed right there.

Your full Source ARN for the permission command will look like this:

arn:aws:bedrock:us-east-1:xxxxxx:agent/ABC123DE45.

I also notice it is in the URL when I click on Agent Details.

I run that command in CloudShell:

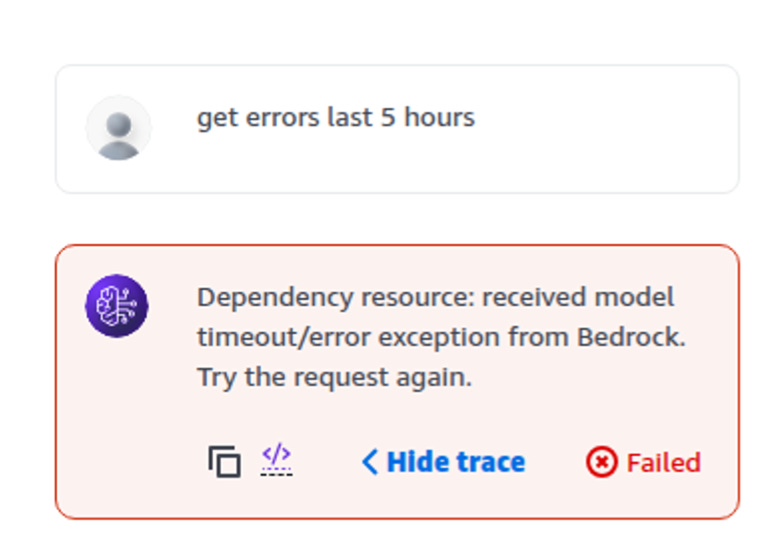

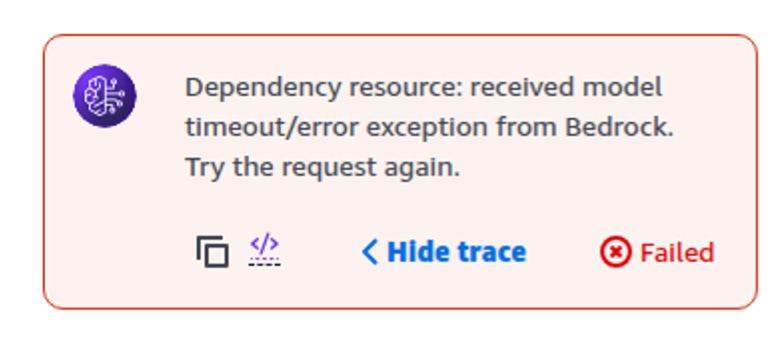

I test again with this prompt and get a timeout error.

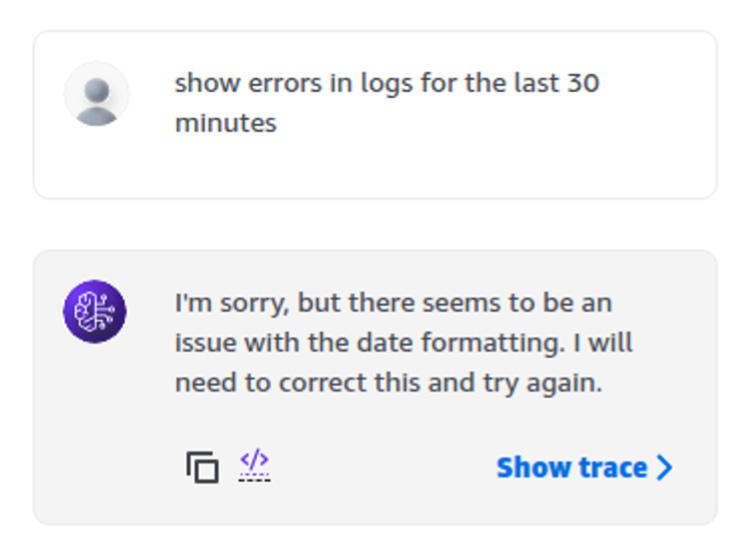

I ask again and get an error telling me to submit the date in the proper format. So much for natural language. I modify the prompt to also change prompts that have text similar to the above “last three hours” to the property date format.

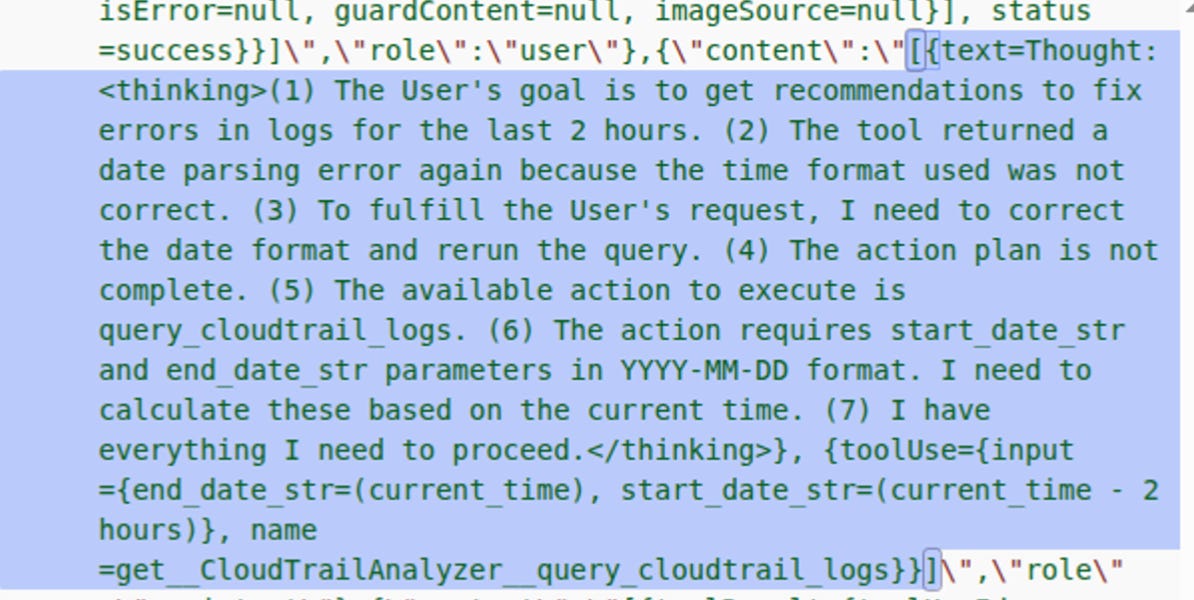

I try again. Now my agent is doing something. And it is taking FOREVER. But I can see steps like this:

It’s up to 50 steps and it is still spinning.

I can see after the 50th try it is still trying to figure out how to properly format the dates to pass into the Lambda function:

And now I think I see the problem:

“Date parsing error: time data ‘(current_time - 2 hours)’ does not match format ‘%Y-%m-%d’

I don’t know how to stop it.

I modify my prompt to include this: ‘%Y-%m-%d’ and when I hit Save and Prepare it seems to stop the existing execution.

Clearly date formatting is a problem. Finally I just say “use default” because I programmed the Lambda function to get the last 15 minutes of errors if no dates are provided. The instructions say to not pass in a start and end if nothing is passed in.

FINALLY.

My takeaway

I could go on and on trying to fix this problem but you know what I think right about now? This AI agent is not the tool for the job in terms of converting the time and date to the proper format. Either that or it’s not the right model. This seems a bit convoluted and it is taking way too long to fiddle with the prompt and try to get the agent to do what I want, but the results are still very useful.

Perhaps it would be better at this point to create a user interface or method for obtaining a proper date and time from a user and then just using AI to summarize the data and just call the Lambda function.

These are the kind of decisions you’ll need to make when creating an AI application. How frustrating is it going to be for a user who uses natural language in some way the agent can’t properly parse and can’t figure out a way to get an answer to get their job done? At that point all the “coolness” of the AI application is out the window, right?

Unless you can get a consistent result that handles every possible way a user might define a date, better have an optional calendar to select the dates in the UI as well.

Datetime

I did go back and ask if the OpenAPI spec should be using date-time instead of string:

Stick with

"type": "string".While OpenAPI supports a

"format": "date-time", you should avoid using a nativedateordate-timetype for Bedrock Agents for two very practical reasons:

Serialization: Your Lambda uses

json.dumps(). Python’sjsonlibrary cannot serialize nativedatetimeobjects; it only handles strings. Using"type": "string"in the schema perfectly matches theisoformat()strings yourdate_serializerproduces.LLM Flexibility: Bedrock Agents are much better at passing strings than strictly validated date objects. By defining them as strings, you allow the Agent to pass either

2026-02-15or2026-02-15T14:00:00Zwithout the API validation layer blocking the request before it even hits your code.

I got a recommendation to update the instructions like this but haven’t tried that yet:

"You have access to a session attribute called current_time. Whenever a user asks for a relative date (e.g., 'today', 'yesterday', 'the last 24 hours'), use current_time to calculate the exact YYYY-MM-DD or ISO strings. Send these as start_date_str and end_date_str to the query_cloudtrail_logs tool."Maybe that would help.

But I’m also thinking about a periodic deterministic batch job that calls the Lambda function with the proper date and time so nothing is every missed and stores the output in S3. That would make it easy for someone to periodically review the errors and fix them.

This data could be retrieved and used a lot of different ways. They key is that the data needs to be specific about how to fix the errors and provide code that can be copied and pasted where it needs to go or provide explicit instructions for steps in the console (thought that is not generally used for automated deployments).

Bedrock AgentCore

I found the UI a bit cumbersome to use. I also dee this note at the top that seems to be pushing AgentCore. I wonder if this section in Bedrock Agents will be going away as AWS pushes people towards AgentCore.

I’m not sure but checking out Bedrock AgentCore would be the logical next step.

Also the Strands Agent.

Agents vs. Batch Jobs

I’m back to my original thought. An agent is essentially a batch job that uses some AI capabilities. You configure some parameters and run your job - the exact same way I created my job framework. Only in this case you’re passing in a prompt and you’ve got some functionality that sends that prompt to an agent. All the things I just did in the console I could have done in the Lambda function itself. I could have passed in a natural language prompt to the Lambda function and told the Lambda function to transform it to dates.

Honestly after going through all of the above, I’ll probably stick with my batch job framework and my AI framework but I’m working on merging the two to get the best of both worlds. Bedrock agents will help you out if you don’t really want to spend the time piecing everything together but you might have to play around with it more to get the results you want out of it. You may also want to try Bedrock AgentCore with some other options for more control over the process.

Subscribe for more stories like this in Good Vibes.

— Teri Radichel