Recently I’ve been working on my multi-agent framework and trying out different models. The main point I want to make in this post, based on my experience over the past few days, is that you should test what you’re doing with multiple models to find the one that works best for the task at hand.

I’ve been using Kiro CLI for programming with a custom agent that I configure differently from the default agent. I originally showed how to use a custom agent with Amazon Q, and I haven’t had to change my code much to work with Kiro because Kiro CLI is basically Amazon Q rebranded. The initial script I used to deploy a custom Kiro CLI agent is in this post, along with a link to the code on GitHub.

I prefer the CLI over an IDE for these reasons:

https://medium.com/cloud-security/cli-vs-ide-for-ai-coding-61cb0bc2db28

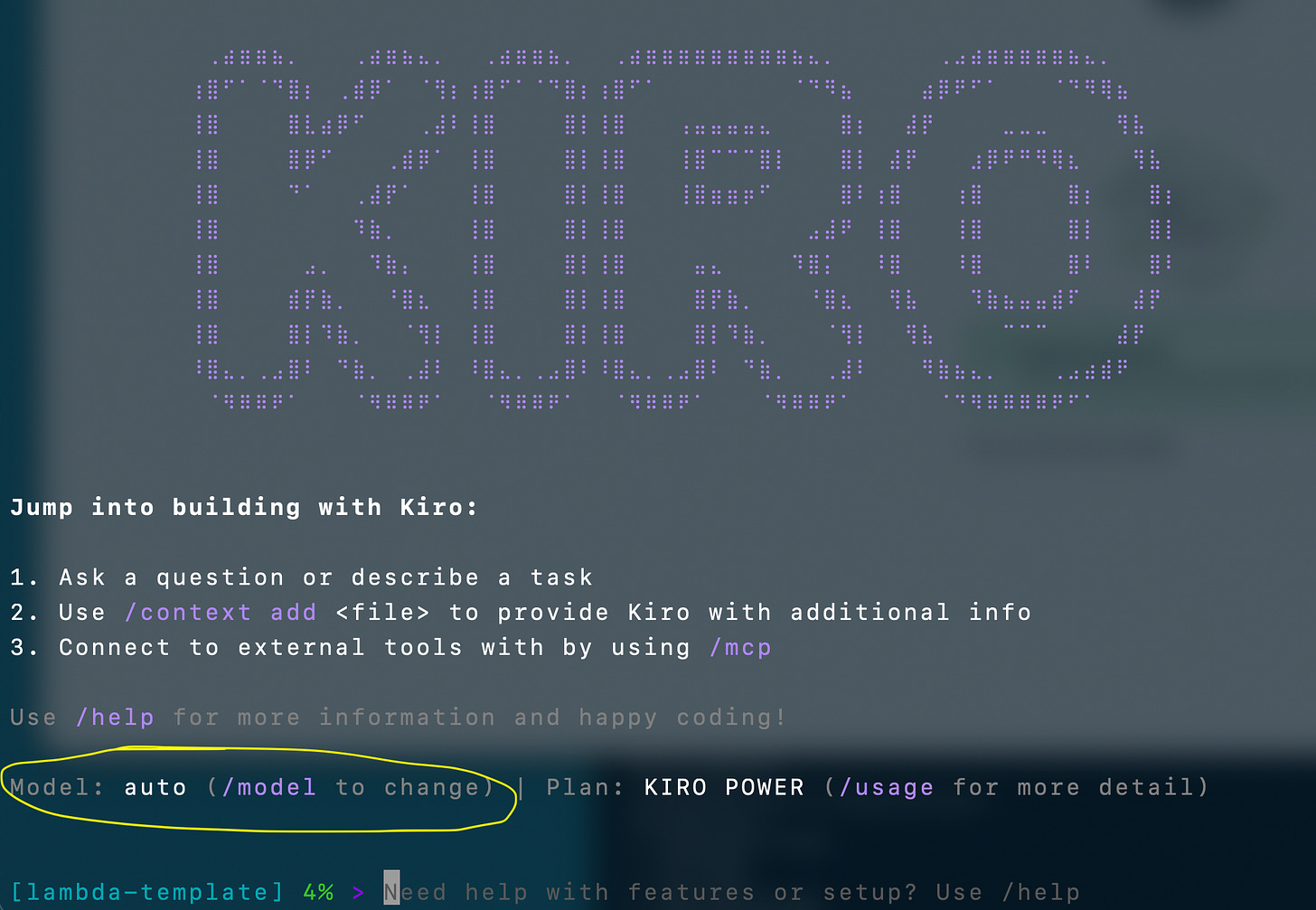

When you start Kiro CLI, you can see which model it’s using.

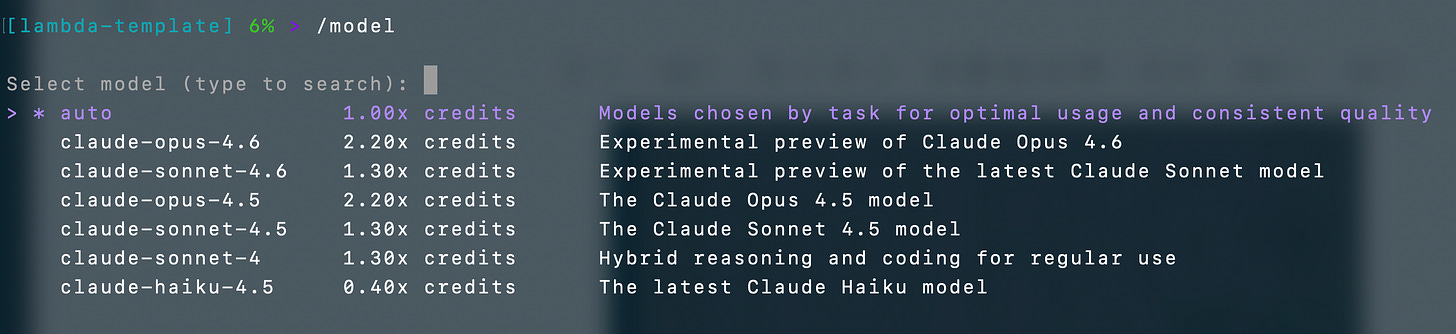

Type /model if you want to switch to a different model:

Kiro starts in auto mode, which is supposed to select the right model for your prompt. However, I consistently find myself going in circles and getting frustrated in that mode, so I almost immediately switch to another model. As you can see, the different models cost different amounts of tokens. I’ve mostly been using the latest model, claude-opus-4.6.

Configure the default model used by your Kiro chat agent using this command:

kiro-cli settings chat.defaultModel "claude-3-sonnet"

It may seem like a cheaper model will save you money, but if you have to prompt it 20 times to get the right result, did you really save tokens (money)? If it completely messes up your code when you ask it to make a change, does that save you money when you then have to get the code back to a working state?
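To put rough numbers on that question, here is a minimal shell sketch that treats the effective cost of a task as the relative cost per prompt multiplied by the number of prompts it takes to get a working result. The multipliers (0.4 and 2.2), the prompt counts (20 and 3), and the `effective_cost` helper are all hypothetical, chosen for illustration only; they are not Kiro’s actual pricing. The sketch also ignores the cost of repairing code a model broke, which would only make the cheaper model look worse.

```bash
#!/usr/bin/env bash
# Sketch: compare the effective cost of one task across two models.
# All numbers below are HYPOTHETICAL, for illustration only; they are not
# Kiro's actual per-model pricing.

# effective_cost PER_PROMPT_COST PROMPTS (hypothetical helper)
# Prints relative cost per prompt x number of prompts, to two decimals.
# awk is used because bash integer arithmetic can't handle fractional multipliers.
effective_cost() {
  local per_prompt="$1" prompts="$2"
  awk -v p="$per_prompt" -v n="$prompts" 'BEGIN { printf "%.2f\n", p * n }'
}

cheap=$(effective_cost 0.4 20)   # cheaper model that needs 20 prompts
strong=$(effective_cost 2.2 3)   # pricier model that gets it right in 3

echo "Cheaper model:  $cheap"
echo "Stronger model: $strong"

# Report which model actually cost less for this task (+0 forces numeric comparison)
awk -v a="$cheap" -v b="$strong" \
  'BEGIN { print ((a + 0 < b + 0) ? "Cheaper model wins" : "Stronger model wins") }'
```

With these made-up numbers, the model that costs more than five times as much per prompt still comes out ahead for the task (6.60 versus 8.00) because it needs far fewer attempts.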

Try the different models, figure out which ones work best for which tasks, and optimize your results while saving tokens, time, or both. I have consistently found that when I’m writing code and struggling to get something working in auto mode, switching to another model gets me better results almost immediately.

Those are just the models that happen to be available in Kiro CLI, not all the models available on AWS. You have many more models to choose from if you use Amazon Bedrock. I explain the different AWS generative AI services in an earlier post that includes a screenshot of the Bedrock model catalog.

I showed how to use Amazon Bedrock in a Lambda function in this post, and with the code I provided you can switch your Lambda function to any model available in Bedrock.

Recently, after the US government cut ties with Anthropic and switched to OpenAI, I read something interesting. Apparently, guardrails are baked into Anthropic models, whereas OpenAI adds guardrails after the fact.

https://defensescoop.com/2026/02/27/pentagon-threat-blacklist-anthropic-ai-experts-raise-concerns

Depending on what you are trying to do, the restrictions integrated into the model may make your job more difficult. I have found that it’s not too hard to get around certain restrictions because AI is non-deterministic: the same request can get a different answer on the next try.

Although companies are trying to define how their models can and cannot be used, attackers will find ways around any guardrails built into the model itself. I don’t think placing these restrictions on people performing security work is very helpful, because it blocks legitimate security professionals and makes their jobs harder. Meanwhile, malicious actors will use the model for that purpose anyway or find some other source.

I’m not sure whether the answer is to let anyone ask anything. I just know it is frustrating when I’m using these tools to better protect customers during a penetration test and I can’t get the answer I need because the tool tells me I’m doing something malicious and unlawful. I’m not. I am also aware that another model out there will happily provide the answer I’m seeking.

It’s a very complicated decision, however, because kids are using these chatbots and asking all kinds of things. In some cases, the wrong answer can be detrimental to someone’s well-being. I suppose the same problem has existed for anyone using Google search for as long as it has been around, and for bulletin boards before that. Seek and ye shall find…

In any case, different models have different capabilities, and it’s a good idea to try out the different options when you’re writing code, processing data, or doing research and compare the results. Consider how many prompts it takes to get a result, and weigh that against the cost of the tokens you’re spending.

I’m also using a lot of deterministic code, templates, and a multi-agent framework to obtain better results. The other night I finally got my agents working autonomously while I was sleeping, and they produced pretty good results. More on that later.

Subscribe for more stories like this and follow Good Vibes.

— Teri Radichel